In our previous post, we discussed how traditional methods of capturing east-west traffic in the datacenter have become increasingly limited due to virtualization. In many cases, it is no longer possible to capture network traffic for a network packet broker by connecting a TAP to your network or using a SPAN port.

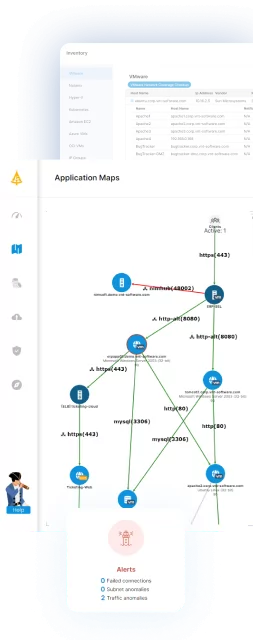

With this in mind, we presented ways to capture full network traffic, including virtual machine traffic, to monitor network performance in a VMware environment. In many cases, however, full network monitoring is not needed. Just knowing who talks to whom and how much data is sent between them may be enough for your network visibility needs. In this post, we will explore a different approach to network visibility into the east-west traffic in a virtualized datacenter: gathering statistical data on network flows using NetFlow.

What is NetFlow

NetFlow is a network protocol that was originally developed by Cisco to analyze network traffic. It analyzes packets that are sent over the network and groups them into “flows”, which are more or less based on the protocol, interface, source and destination IP addresses, and ports. For each of these flows, NetFlow aggregates basic information such as the number of bytes and packets, which TCP flags were seen, etc.
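To make the idea of a flow more concrete, here is a minimal Python sketch of the grouping step. It is only an illustration, not how any particular exporter is implemented: the packet dictionaries and the names `FlowKey`, `FlowStats`, and `aggregate` are made up for this example, and the key is simplified to protocol, addresses, and ports.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class FlowKey:
    """Fields that identify a flow in this simplified sketch."""
    protocol: int
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int


@dataclass
class FlowStats:
    """Counters aggregated for every packet that matches a flow key."""
    packets: int = 0
    bytes: int = 0
    tcp_flags: int = 0  # bitwise OR of the TCP flags seen so far


def aggregate(packets):
    """Group packet summaries (hypothetical dicts) into per-flow counters."""
    flows = defaultdict(FlowStats)
    for pkt in packets:
        key = FlowKey(pkt["protocol"], pkt["src_ip"], pkt["dst_ip"],
                      pkt["src_port"], pkt["dst_port"])
        stats = flows[key]
        stats.packets += 1
        stats.bytes += pkt["length"]
        stats.tcp_flags |= pkt.get("tcp_flags", 0)
    return dict(flows)
```

Two packets of the same TCP connection travelling in the same direction end up in a single entry, while the replies form a second flow because source and destination are swapped.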

The NetFlow protocol is designed to be as efficient as possible in terms of network bandwidth. It can group many packets into a single flow and also supports packet sampling, meaning it will only analyze 1 out of every X packets that it captures. The NetFlow data is then sent over the network in UDP packets to a NetFlow Collector; in NetFlow version 5, for example, each packet carries up to 30 flow records. NetFlow Collectors are the components that process the NetFlow packets and decode them so that they can be analyzed.

The most common versions of NetFlow used today are versions 5 and 9. There is also the IPFIX protocol, which is based on NetFlow version 9 but is an IETF standard rather than a proprietary Cisco protocol. NetFlow is supported by most network routers and some network switches, and it can also be sent by VMware.
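All three formats start their export packets with a 16-bit version number in network byte order (5, 9, or 10 for IPFIX), so a collector can tell them apart from the first two bytes. The following Python sketch shows that check; the function name `export_version` is just an illustrative choice.

```python
import struct

# Version numbers carried in the first two bytes of an export packet.
KNOWN_VERSIONS = {5: "NetFlow v5", 9: "NetFlow v9", 10: "IPFIX"}


def export_version(datagram: bytes) -> str:
    """Return a readable name for the export format of a UDP payload."""
    if len(datagram) < 2:
        raise ValueError("datagram too short to contain a version field")
    (version,) = struct.unpack("!H", datagram[:2])
    return KNOWN_VERSIONS.get(version, f"unknown ({version})")
```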

NetFlow in VMware

VMware supports generating NetFlow data for the traffic of your VMs, which makes it easy to gain network visibility into your VMware environment quickly. In the VMware UI and documentation it is called NetFlow, but what VMware actually sends is IPFIX, so make sure your collector supports it. In our experience, enabling NetFlow in VMware has no measurable impact on the performance of the servers, and it can be enabled safely.

Note that in order to enable NetFlow support in your environment, you must be using vSphere Distributed Switches (vDS). If you are using standard switches, you cannot generate NetFlow using VMware. You could, however, use promiscuous mode network capture like we discussed in our previous post and then use a tool such as pmacct to generate NetFlow from that traffic.

If your VMware network is based on vDS, then you can enable NetFlow in just 2 simple steps.

Set up your vDS switches to send NetFlow

To enable NetFlow, the first step is to configure the NetFlow settings on the vDS itself. To do this, right click the vDS and under Settings, select Edit NetFlow.

In the NetFlow settings page, we need to specify the following:

- Collector IP address – This is the IP address that the NetFlow packets will be sent to.

- Collector port – The port that the NetFlow packets will be sent to. The default that VMware sets here is 0. This must be changed as it is invalid and you will not receive the packets. The default port for IPFIX as defined in the IETF standard is 4739 so that is a good option to use.
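Before pointing a full collector at the vDS, it can help to confirm that export packets actually arrive on the chosen port. The sketch below is a bare UDP listener in Python, not a NetFlow collector: it only reports the sender and size of the first datagram it sees. The helper names `open_collector_socket` and `wait_for_datagram` are invented for this example, and the port 4739 default simply mirrors the setting above.

```python
import socket


def open_collector_socket(port: int = 4739, host: str = "0.0.0.0") -> socket.socket:
    """Bind a UDP socket on the address the vDS was told to export to."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.bind((host, port))
    return sock


def wait_for_datagram(sock: socket.socket, timeout: float = 10.0):
    """Return (sender_ip, payload_length) for the next datagram, or None on timeout."""
    sock.settimeout(timeout)
    try:
        data, (src_ip, _src_port) = sock.recvfrom(65535)
    except socket.timeout:
        return None
    return src_ip, len(data)
```

Calling `wait_for_datagram(open_collector_socket())` on the collector machine and getting `None` back usually points to a wrong collector address or port, a firewall in between, or NetFlow not yet being enabled on any port group.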

The rest of the settings are optional, but we will go over them:

Observation Domain ID:

This field is used to identify the NetFlow source and the value is set in the corresponding field in the NetFlow packet header.
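In IPFIX, the Observation Domain ID is the last field of the 16-byte message header, after the version, message length, export time, and sequence number. As a rough sketch of how a collector could read it, the Python below unpacks that header; the names `IpfixHeader` and `parse_ipfix_header` are illustrative and not taken from any specific collector.

```python
import struct
from typing import NamedTuple


class IpfixHeader(NamedTuple):
    version: int
    length: int
    export_time: int
    sequence_number: int
    observation_domain_id: int


# Two 16-bit fields followed by three 32-bit fields, network byte order.
_IPFIX_HEADER = struct.Struct("!HHIII")


def parse_ipfix_header(datagram: bytes) -> IpfixHeader:
    """Decode the fixed IPFIX message header from a UDP payload."""
    if len(datagram) < _IPFIX_HEADER.size:
        raise ValueError("datagram shorter than an IPFIX message header")
    header = IpfixHeader(*_IPFIX_HEADER.unpack_from(datagram))
    if header.version != 10:
        raise ValueError(f"not an IPFIX message (version {header.version})")
    return header
```

A collector that receives exports from several vDSs can use `observation_domain_id` to keep their flows apart, provided each vDS was given a distinct value.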

Switch IP Address:

The switch IP address sets the IP address that will be in the source address field of the IP header for the NetFlow UDP packets. This does not have to be a routable address, but setting it incorrectly may cause the routing of the NetFlow packets to fail. This can be used to differentiate between the NetFlow sent by different vDSs. If it is left empty, each NetFlow packet will have its source address set to the management IP address of the ESX host that sent it. If you need to open firewalls to let the NetFlow traffic through, this is the setting you will need to look at.

Idle flow export timeout and Active flow export timeout:

These settings specify how much time can pass before a flow is reported to the NetFlow collector. A flow that is still active will be reported every 60 seconds by default. A flow that has gone idle will be reported after 15 seconds by default.
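The interplay of the two timeouts can be sketched as a small decision function. This is a simplified model for illustration, assuming the exporter tracks when a flow was last reported and when its last packet was seen; it is not VMware's actual implementation, and `FlowTimers` and `export_reason` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FlowTimers:
    last_export: float  # time the flow was created or last reported (seconds)
    last_packet: float  # time the most recent packet of the flow was seen


def export_reason(flow: FlowTimers, now: float,
                  active_timeout: float = 60.0,
                  idle_timeout: float = 15.0) -> Optional[str]:
    """Return why a flow should be reported now, or None if it should not."""
    if now - flow.last_packet >= idle_timeout:
        return "idle"    # no packets for idle_timeout seconds
    if now - flow.last_export >= active_timeout:
        return "active"  # still busy, but due for a periodic report
    return None
```

With the defaults, a long-running transfer shows up at the collector roughly once a minute, while a short exchange is reported about 15 seconds after its last packet.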

Sampling rate:

The sampling rate can be set to reduce the amount of NetFlow traffic being sent. A sampling rate of 0 specifies that all packets are analyzed, while a sampling rate of n specifies that only 1 in n packets will be analyzed.
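The effect of the sampling rate on the reported numbers can be illustrated with a few lines of Python. The sketch assumes simple deterministic sampling (every n-th packet), which may not match exactly how the vDS picks packets, and the function names are made up for this example.

```python
def is_sampled(packet_index: int, sampling_rate: int) -> bool:
    """Decide whether the packet at this position is analyzed.

    A rate of 0 means every packet is analyzed; a rate of n means
    roughly 1 in n packets is analyzed.
    """
    if sampling_rate < 0:
        raise ValueError("sampling rate must not be negative")
    if sampling_rate == 0:
        return True
    return packet_index % sampling_rate == 0


def estimate_total(sampled_count: int, sampling_rate: int) -> int:
    """Scale a sampled counter back up to an estimate of the real total."""
    return sampled_count * max(sampling_rate, 1)
```

Keep in mind that with sampling enabled, the byte and packet counts in the collector are based on only a fraction of the traffic, so they need to be multiplied by the sampling rate to approximate the real volume.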

Process internal flows only:

If this is set to Enable, NetFlow will only be sent for traffic that does not leave the host. You can set this in case you already have a solution for capturing NetFlow on the physical network.

Completing these settings does not start sending NetFlow; it only tells VMware where to send it. In order to actually send the NetFlow data, it needs to be enabled on the port groups.

Enable NetFlow on the Distributed Port Groups

It’s important to edit the settings and enable NetFlow for each distributed port group whose traffic you want NetFlow data for. To do this, right-click the port group and select Edit Settings. Then in the Monitoring tab, set NetFlow to Enabled.

This can also be done in bulk by right clicking the vDS and under Distributed Port Group, selecting Manage Distributed Port Groups. There, select the checkbox for Monitoring, and then select the port groups you would like to enable NetFlow for.

Once NetFlow is enabled on a port group, it will send NetFlow data to the collector specified in the settings of the vDS. The port group will only send NetFlow data for packets that are “entering” the port group, not for packets that are “exiting” it. Looking back at our example, if we enable NetFlow on Distributed Port Group 1, we will only get NetFlow for traffic that is sent to VMs 1, 2, 5, and 6. Any traffic sent from those VMs will not have NetFlow data sent for it. To collect data for all the traffic, enable NetFlow on every Distributed Port Group we want NetFlow for, and also on the Uplink port groups. If we do not enable NetFlow for the uplink port groups, we will not get NetFlow for any traffic going out from the VMs to the physical network. Note that in the bulk port group configuration it is not possible to enable NetFlow for the Uplink port group, it must be done

Conclusion

In this post, we explained how to get statistical traffic information on an entire VMware environment quickly and easily, without installing anything on the servers and with negligible network performance impact. The information in NetFlow is limited, and if you need the full packet data, check out our previous post where we showed how to achieve that goal without blind spots.

Of course, VMware is not the only platform that supports NetFlow; it is also supported by most modern network routers. But what if you are using Microsoft Hyper-V? We’ll take a look at that in our next post.